According to the FDA, it “is responsible for protecting the public health by ensuring the safety, efficacy, and security of human and veterinary drugs, biological products, and medical devices; and by ensuring the safety of our nation’s food supply, cosmetics, and products that emit radiation.” Given this mission, the FDA should put the interest of public health ahead of other concerns, such as the profits of a pharmaceutical company. While many at the FDA are dedicated to this mission, federal agencies are routinely captured by industry. So, it is not surprising that the FDA has benefited companies at the expense of public health. Charles Seife wrote an article that appeared in the February 2018 issue of Scientific American. While there are legal issues here, my concern is with ethics.

On the face of it, the moral problem is easy to solve. As the FDA is tasked with protecting public health, its moral duty is to do that. Putting public health at risk to benefit a company or individual would be wrong. Part of the problem, as noted by Seife, is that the FDA is secretive, which makes it difficult for the public to know about the FDA and the products it approves. Another part of the problem, also noted by Seife, is that the FDA seems willing to allow research misconduct to remain unreported. Under the current administration, it seems likely that things will only get worse.

While it is tempting to see evidence of misdeeds when drugs are recalled or given new warnings, this should be expected even when products are properly evaluated. This is because of how the inductive reasoning used in product trials works. While inductive logic is essential, it has a fundamental problem that is called, shockingly enough, the problem of induction. Since an inductive argument’s conclusion always “leaps” beyond its premises, the conclusion of such an argument can always be false, even when all the premises are true. Since the controlled experiments of the trials are inductive, they can be properly conducted and still yield a false conclusion. The results of these trials are then generalized to the entire population, which is another inductive argument and another chance for things to go wrong.

For example, even a large sample will not contain every genetic or physiological variation relevant to drug interactions. As such, a drug that was safe in the trials might have unexpected results out in the wild. So, one should not rush to judgment if an approved drug needs a warning label revision or has unexpected effects on some people. That said, the concern about how the FDA operates remains, as Seife’s research indicates. As such, the FDA seems to have acted wrongly by putting corporate interests ahead of public health. It remains to be seen what the future will bring, but even under traditional administrations, the FDA has engaged in bad behavior. If even this modest oversight is stripped away, things will become much worse.

An obvious solution is to make the FDA’s process and data available to the public. Under this solution, the public would have access to everything that occurs within the FDA as well as all the information provided to the FDA by the companies whose products are being evaluated. While this would solve the problems noted above, there are reasonable concerns about such complete transparency.

Allowing full public access to the FDA’s information would also allow the same access to competing pharmaceutical companies (and others with a financial interest in the data). Such transparency would expose a company’s trade secrets as well as its commercial and financial information. This could cause “substantial competitive injury” and would be like playing poker while being forced to let everyone see your cards. Because of the potential harm, such full transparency would seem to be wrong.

It could be countered that all companies would be on equal footing, and no one would have an advantage. Going back to the poker analogy, if everyone must show their cards, no one has an advantage. The obvious problem is that foreign companies that do not undergo FDA approval would have access to the data and this could give them an edge against companies that sought FDA approval.

Another counter is to argue on utilitarian grounds: even if transparency harmed companies, the advantage to public health would outweigh this. But this could be countered by arguing the reverse, that the harm to companies would outweigh the benefit to public health. As these concerns are reasonable, complete transparency is morally problematic under the current economic system. As such, what would seem to be needed is an approach that protects the public while also protecting the legitimate interests of companies.

As Seife noted in his article, the information the FDA has kept from the public includes data about harmful side-effects and concerns about the efficacy of products. This information has been redacted or withheld based on the harm that would be done to the company if the truth were known. While it is true that releasing such information could harm a company’s profit, this is not a morally acceptable reason. After all, the mission of the FDA is to protect public health; protecting private profit at the expense of public health is a violation of this mission.

While a company or individual does have a right to keep certain information private, this right does not extend to concealing danger to others. To use an analogy, while I do have the right to keep my medical records private, I would not have the right to keep it a secret if I were infected with Ebola. To use another analogy, while a company would have a right to keep its manufacturing process for snacks secret, it has no right to keep secret the fact that the main ingredient is rats. The public does not have a right to know the company’s trade secrets, but they do have a right to know if the snacks contain rats. Likewise, while the public does not have a right to know the legitimate trade secrets of a drug company, they do have the right to know the side-effects and efficacy of the drugs they take. As such, the FDA can fulfil its proper mission of protecting public health while also protecting legitimate trade secrets. Companies that want to profit from concealing data from the public with FDA collusion might be dismayed by this, but they have no moral right to expect such concealment, especially when they can still make massive profits by making safe and efficacious drugs.

Considering the actions of the current administration, it is terrifying to consider how much worse things could become. Information about side-effects and ineffective drugs has been concealed in the past, but Trump and Musk are dedicated to dismantling the federal government, including the FDA. Even in the best of times, the FDA did not protect us very well, and it is reasonable to think it will become much worse. While it is always wise to be cautious about drugs and procedures, it would be prudent to be extremely cautious about forthcoming FDA approvals.

Joyce Short, a victim of deception,

For those not familiar with

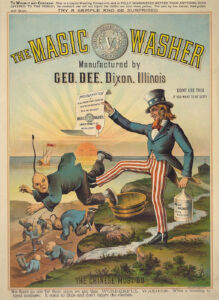

While the United States has the best health care money can buy, many Americans cannot afford it. Many Americans are underinsured or uninsured, and even the insured might face denial of coverage. As their response to the execution of a health care CEO showed, Americans are aware of this. Most politicians, with the exception of people like Bernie Sanders, have put their faith in the fact that people forget quickly and have done nothing to address this problem.

Early immigration laws, such as the

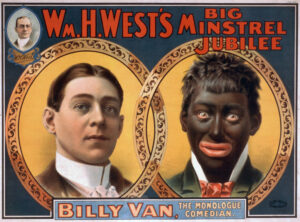

While it can be argued that “toxic masculinity” is useful, I still feel a bit uncomfortable about the phrase. While it would be natural to accuse me of fearing an attack on my maleness, my concern is a pragmatic one about the consequences of the term, which, from a utilitarian standpoint, also makes it a moral one.

While toxic masculinity faced some criticism, it seems to have emerged victorious. While the term is not used as often as it was, I am still somewhat uncomfortable with it. This discomfort is not because I am a man. Unlike more fragile “men”, I am not threatened by criticism. I can distinguish between criticisms of bad behavior by men and the rare attacks on men simply for being men. My slight discomfort arises from two sources. The first is based in ethics and the second arises from pragmatic considerations. I will look at the first in this essay and the second in the following essay.

Mark Zuckerberg’s recent crisis of masculinity

There have been a series of

When politicians shut down the federal government, some federal workers are ordered to work without pay. To illustrate, TSA and Coast Guard personnel are often ordered to keep working even when their pay is frozen. This raises the moral question of whether it is ethical to compel federal workers to work without pay. The ethics of the matter are distinct from the legality of unpaid labor, which is a matter for the courts to sort out based on what they think the laws say.